Dilated Spatial Generative Adversarial Networks for Ergodic Image Generation

Abstract

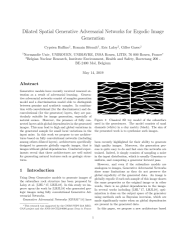

Generative models have recently received renewed attention as a result of adversarial learning. Generative adversarial networks consist of a sample generation model and a discrimination model able to distinguish between genuine and synthetic samples. In combination with convolutional (for the discriminator) and de-convolutional (for the generator) layers, they are particularly suitable for image generation, especially of natural scenes. However, the presence of fully connected layers adds global dependencies in the generated images. This may lead to large, global variations in the generated sample for small local variations in the input noise. In this work we propose to use architectures based on fully convolutional networks (including, among others, dilated layers), specifically designed to generate globally ergodic images, that is, images without global dependencies. The experiments conducted reveal that these architectures are well suited for generating natural textures such as geologic structures.
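The locality argument in the abstract can be illustrated with a minimal sketch. It is not the authors' architecture: it is a one-dimensional toy with random weights, and the names `dilated_conv1d` and `generate`, the kernel size of 3, and the dilation rates 1, 2, 4 are all assumptions chosen for illustration. It shows that, in a stack of dilated convolutions with no fully connected layer, perturbing one entry of the input noise can only change outputs inside a bounded receptive field.

```python
import math
import random

def dilated_conv1d(signal, kernel, dilation):
    """'Same'-size 1D convolution whose kernel taps are `dilation` samples apart."""
    half = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = i + (k - half) * dilation
            if 0 <= j < n:  # zero padding outside the signal
                acc += w * signal[j]
        out.append(acc)
    return out

def generate(noise, kernels, dilations):
    """Toy fully convolutional generator: stacked dilated convolutions, tanh after each."""
    x = noise
    for kernel, dilation in zip(kernels, dilations):
        x = [math.tanh(v) for v in dilated_conv1d(x, kernel, dilation)]
    return x

random.seed(0)
dilations = [1, 2, 4]  # assumed values, for illustration only
kernels = [[random.uniform(-1, 1) for _ in range(3)] for _ in dilations]
noise = [random.uniform(-1, 1) for _ in range(64)]

# Perturb a single noise entry and regenerate.
pos = 32
perturbed = list(noise)
perturbed[pos] += 1.0

before = generate(noise, kernels, dilations)
after = generate(perturbed, kernels, dilations)

# Each layer widens the receptive field by (kernel_size // 2) * dilation per side.
radius = sum((len(k) // 2) * d for k, d in zip(kernels, dilations))
changed = [i for i, (a, b) in enumerate(zip(before, after)) if a != b]

print("receptive-field radius:", radius)
print("changed outputs span:", min(changed), "to", max(changed))
```

Every changed output lies within `radius` positions of the perturbed noise entry. By contrast, a fully connected layer would let every output depend on every noise entry, which is the global dependency the abstract argues against.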

Main file

Dilated_Spatial_Generative_Adversarial_Networks_for_Ergodic_Image_Generation (1).pdf (720.71 KB)

main.pdf (717.95 KB)

Origin: Files produced by the author(s)
